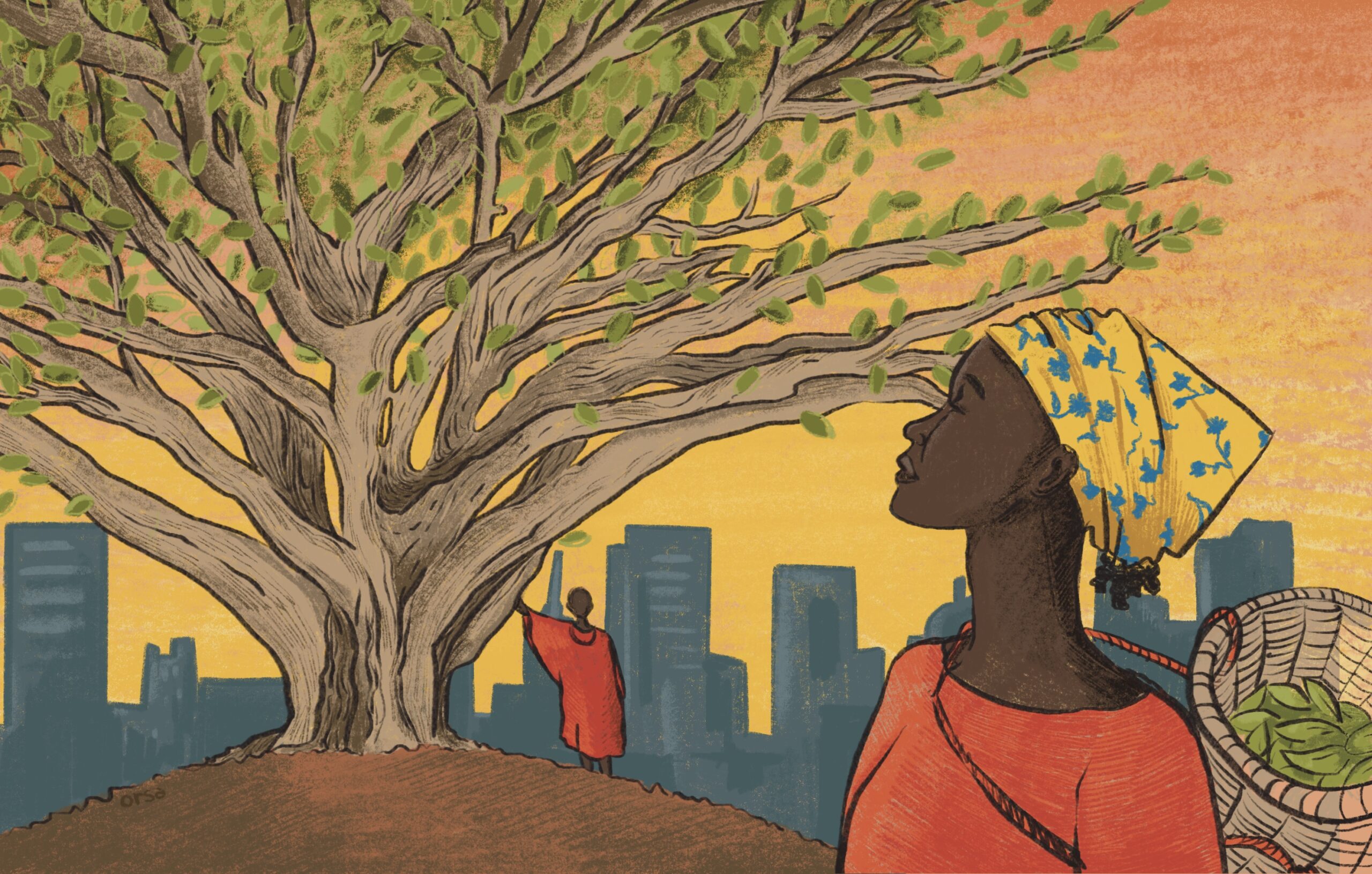

According to tradition: Kenya revives customary laws to battle ecosystem loss

Facing ecological degradation under climate change, some in Kenya are turning back to traditional indigenous knowledge that prioritizes sustainable harmony with nature over short-term profits. Now, these customary rules may become laws on paper as well.